In memory of James Reason (1938–2025), the influential psychologist behind the Swiss cheese model (SCM),1–3 who passed away on February 5, 2025, we honor his legacy by acknowledging how his work has shaped patient safety. Reason’s pioneering insights have profoundly advanced our understanding of human error and systemic safety management across high-risk domains,2–5 including radiation therapy.6 This brief tribute highlights the continued relevance and application of Reason’s concepts by examining their critical role in analyzing radiation therapy incidents.6

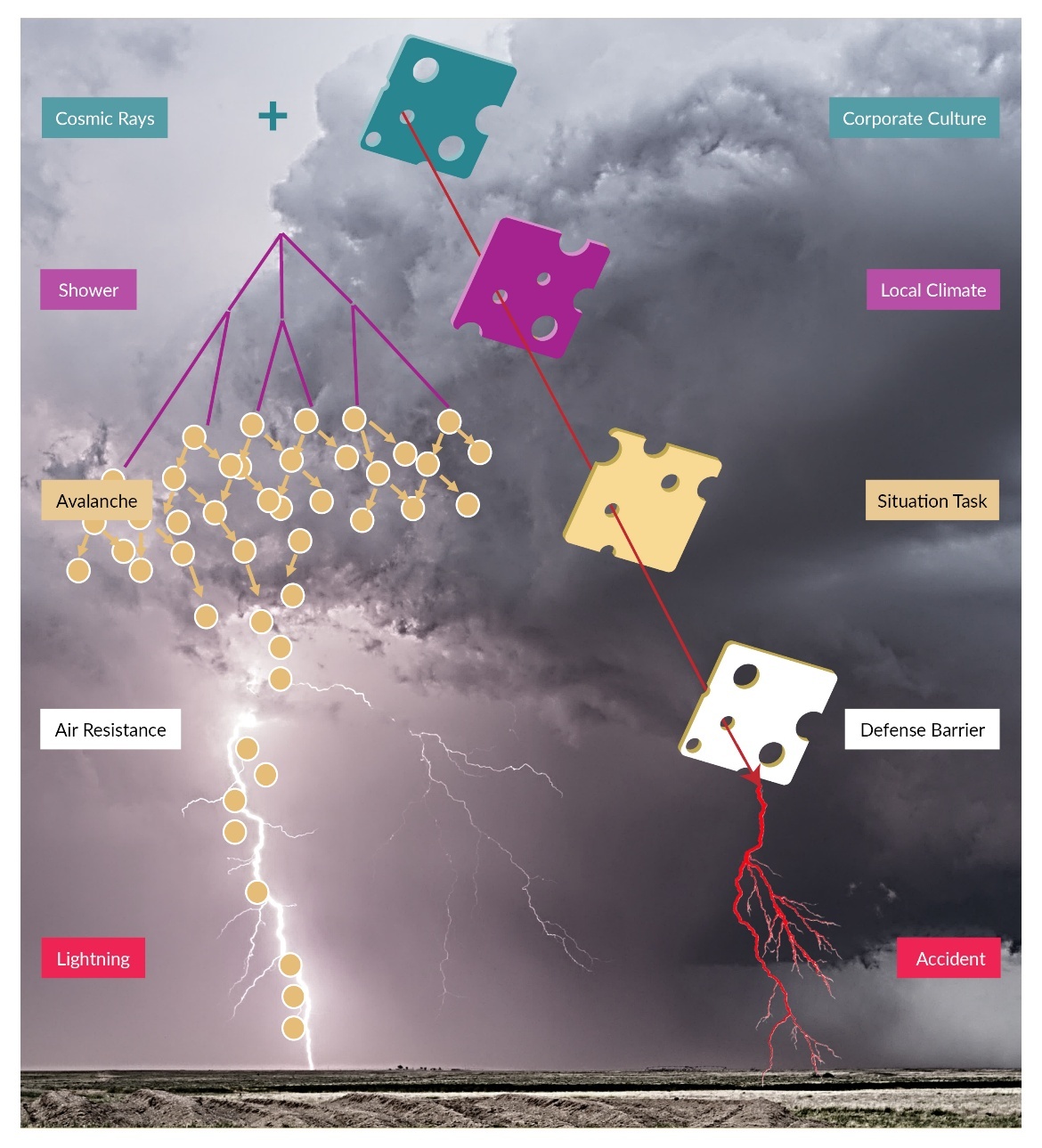

The SCM is a conceptual framework illustrating how accidents and errors occur in complex systems.3 The model uses the metaphor of slices of Swiss cheese, each standing for a layer of defense against errors. The holes in the slices symbolize weaknesses or vulnerabilities within each layer. When these holes align across multiple layers, they create a pathway for errors to reach the patient, resulting in events. In Reason’s memory, we use the physics of lightning formation as an analogy to illuminate and honor his pioneering development of the SCM (Figure 1).

The electric field inside a thunderstorm is, on its own, too weak to ionize the air and initiate a breakdown, so recent theories propose a more complex mechanism.7 The process begins invisibly, high above the ground, with cosmic rays triggering cascades of energetic electrons. Cosmic rays constantly hit the Earth’s atmosphere at a rate of about one particle per square centimeter per second. Ordinarily, the resistance of air prevents these electrons from building into an avalanche. However, the cascades, combined with environmental conditions such as electric fields, can evolve into electron avalanches, ultimately creating conductive channels through the resisting air that lead to lightning.

Similarly, organizational influences, which Reason calls latent errors, act like invisible cosmic rays, subtly initiating changes high in the hierarchy. These influences cascade downward through supervisory layers, analogous to the generation and multiplication of high-energy electrons. Preconditions for unsafe acts quietly build up beneath the surface, like an electron avalanche, intensifying vulnerabilities. Like air resistance, employees’ resilience and adaptability constantly absorb imperfections and error cascades in the environment and resist their propagation. However, when environmental conditions overwhelm the front line, much as an electric field reaches a critical threshold, latent errors push active errors to manifest, leading to visible incidents. This analogy highlights James Reason’s fundamental insight: incidents rarely stem from isolated mistakes but arise from complex interactions between latent conditions and triggering events.

According to Reason’s model, the chain of events leading to an incident starts long before a patient arrives for consultation. An active error at the front line is insufficient on its own to cause an event; instead, multiple latent conditions, such as corporate culture, local climate, and situational factors, set the stage.5 Examples of such preconditions include an extended rigid policy, inappropriate purchases, schedule pressure, a noisy environment, and automation implemented without proper assessment. The latent weaknesses, represented as holes in the layers of defense, are dynamic: they continuously appear and disappear as the front line autonomously and adaptively detects and corrects errors throughout daily operations.

Furthermore, Reason recognized that defenses evolve with organizational changes, technological advancements, and the effectiveness (or ineffectiveness) of change management practices. Continuous monitoring and adaptation of these defenses are thus essential.3 Additionally, these latent conditions interact in various ways: a single vulnerability at one organizational level can trigger multiple failures elsewhere (“one-to-many” mapping), or several small factors can converge to form a single critical vulnerability (“many-to-one” mapping).5,8

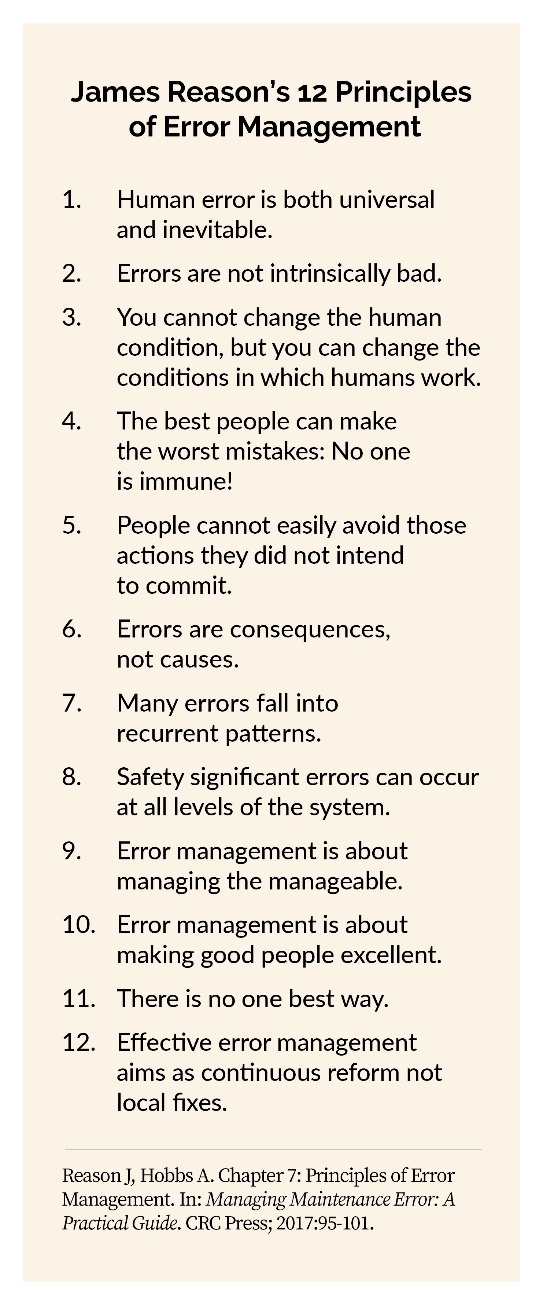

People use the SCM in different ways. Figure 2 illustrates two distinct ways of applying the SCM to analyze an incident: a superficial approach, in which blame is placed primarily on individuals, and a deeper, systemic approach, aligned with James Reason’s original intention, which considers the broader organizational context and underlying systemic vulnerabilities.8,9

Finally, of the many lessons we can learn from Reason, I summarize a few:

- The absence of accidents is not necessarily a sign of safety.5
- The best people can make the worst mistakes.4
- Errors are not causes; they are consequences.4
- Errors are not intrinsically bad.4 Reason emphasized that errors are a natural part of human behavior and can offer valuable insights into the functioning of systems and the cognitive processes of individuals. A learning culture needs errors in order to learn from them.
- Effective error management aims at continuous reform rather than local fixes.4 Solutions depend on time and space. Today’s solution could be tomorrow’s problem.
- Automation can increase the probability of certain kinds of mistakes by making the system and its current state opaque to the people who run it.6 Reason called this “clumsy automation,” where the automation and “defense in depth” make the workings of the system more mysterious to its human controllers and allow the subtle buildup of latent failures hidden behind high-technology interfaces and within the interdepartmental interstices of complex organizations. These hidden latent failures may accumulate over time and lead to catastrophic outcomes when combined with other factors. The same concern applies to artificial intelligence (AI): its effective use requires that healthcare professionals be properly trained in the technology and understand it. There is a risk of overreliance on AI, where healthcare professionals might trust the system too much and not cross-check its outputs.

James Reason left a valuable legacy for understanding and managing risks in complex systems. By addressing common misconceptions and incorporating modern interpretations, organizations can make better use of his ideas to enhance risk management strategies and improve patient safety. As we remember James Reason and his contributions, we continue to build on his legacy to create safer healthcare environments for all.

Disclosure

The author declares that they have no relevant or material financial interests.

About the Author

Mohammad Bakhtiari (mbakhtiari@wellspan.org) is a senior medical physicist at WellSpan Radiation Oncology in Chambersburg, Pennsylvania. His expertise includes radiation oncology, healthcare quality, patient safety, AI applications, and systems improvement. His research focuses on practical solutions for complex healthcare systems.